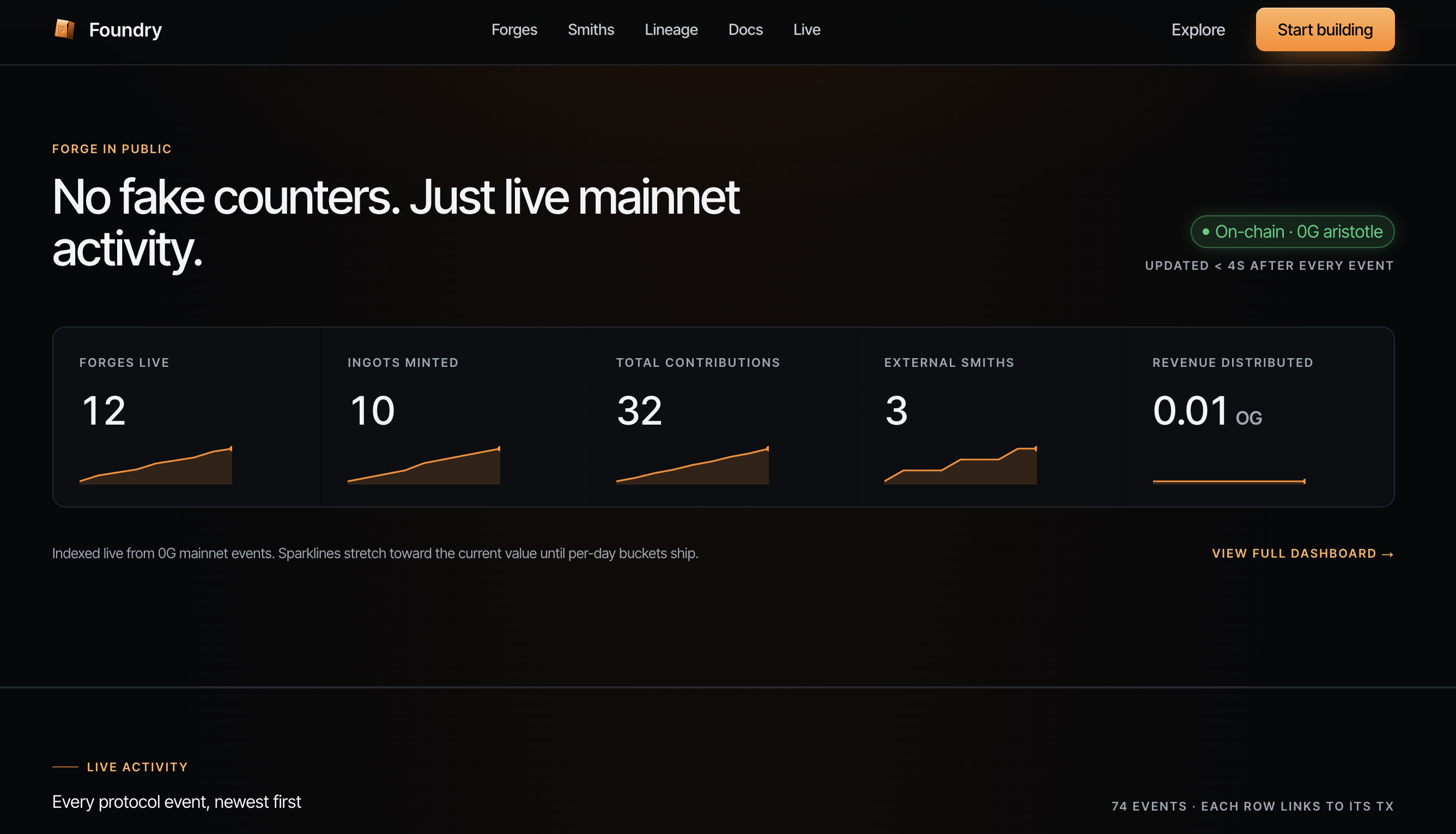

Foundry Protocol turns AI models into co-owned on-chain assets. Pool data, compute & capital into a Forge, get proportional ownership, and earn revenue from every inference. Live on 0G Aristotle.

Foundry Protocol — the ownership and revenue layer for AI on 0G

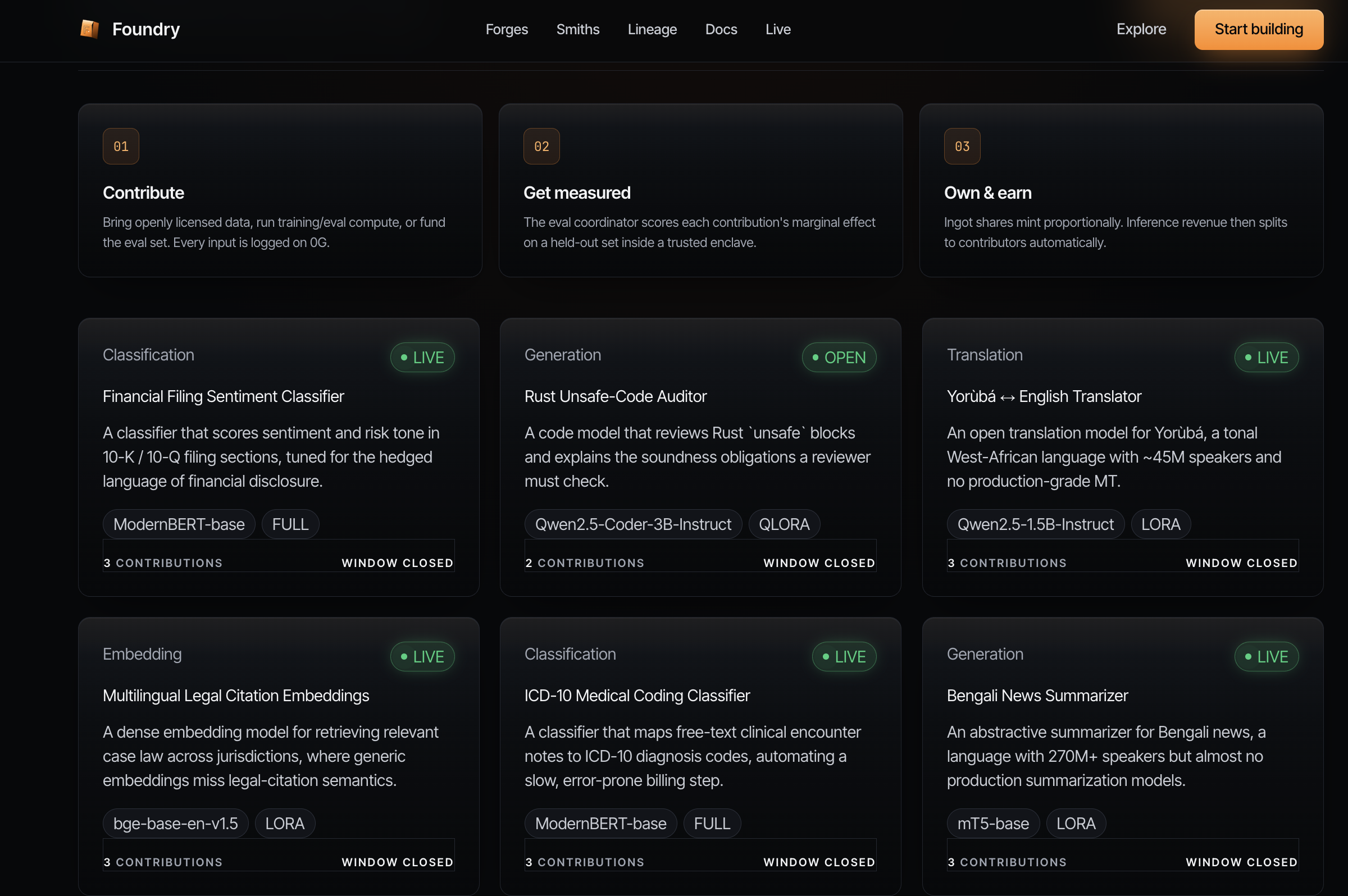

Foundry Protocol turns AI models into on-chain assets that the people who actually build them own and earn from. Today, anyone can use a model — but the data providers, compute suppliers, and funders who make it possible capture none of the upside. Foundry fixes that.

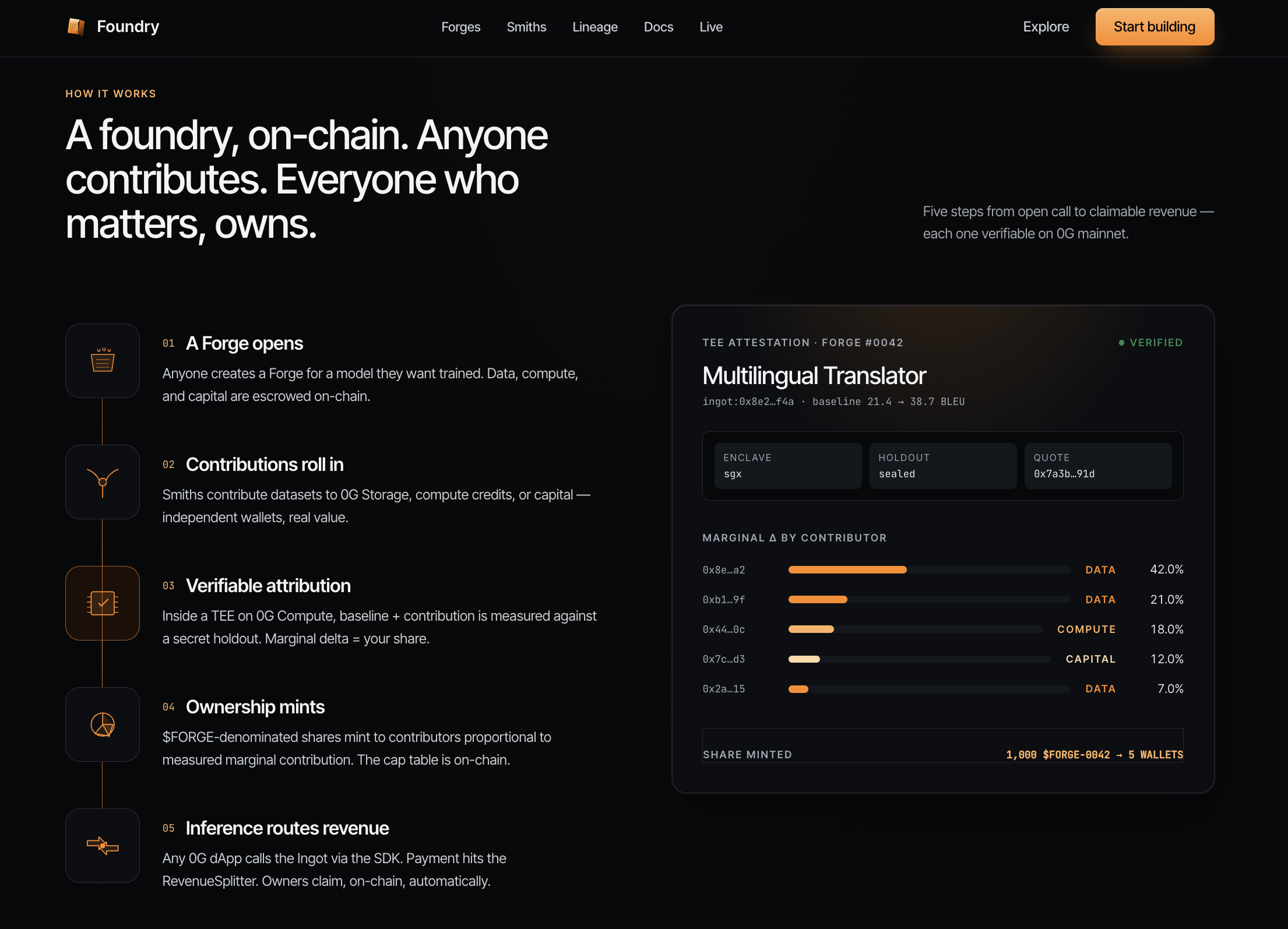

Anyone can open a Forge: a pool of data + compute + capital assembled to co-train a real AI model. Every contributor receives proportional on-chain ownership in the form of an Ingot — an ERC-721 cap table for that model. From then on, every single inference automatically routes revenue back to co-owners through an on-chain RevenueSplitter. No middleman, no off-chain trust, no bridges, no manual payouts.
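To make the proportional payout concrete, here is a minimal sketch of a pro-rata split in TypeScript. It is an illustration only, not the logic of the RevenueSplitter contract: the `Contribution` shape, the integer `weight` units, and the rule that rounding dust goes to the first contributor are all assumptions made for this example. An on-chain implementation would work in wei-sized integers rather than JavaScript numbers.

```typescript
// Hypothetical illustration of a pro-rata revenue split.
// Not the RevenueSplitter contract's actual logic.
interface Contribution {
  owner: string;
  weight: number; // integer ownership units (assumed representation)
}

interface Payout {
  owner: string;
  amount: number; // integer revenue units
}

function splitRevenue(contributions: Contribution[], revenue: number): Payout[] {
  if (!Number.isInteger(revenue) || revenue < 0) {
    throw new Error("revenue must be a non-negative integer");
  }
  const totalWeight = contributions.reduce((sum, c) => sum + c.weight, 0);
  if (totalWeight <= 0) {
    throw new Error("total weight must be positive");
  }

  // Each co-owner receives floor(revenue * weight / totalWeight).
  const payouts: Payout[] = contributions.map((c) => ({
    owner: c.owner,
    amount: Math.floor((revenue * c.weight) / totalWeight),
  }));

  // Integer division can leave a remainder ("dust"). Assumption for this
  // sketch: the dust goes to the first contributor so the sum is exact.
  const distributed = payouts.reduce((sum, p) => sum + p.amount, 0);
  const first = payouts[0];
  if (first) {
    first.amount += revenue - distributed;
  }
  return payouts;
}

// Example: weights 50 / 30 / 20 on 1000 units of revenue
// yield payouts of 500 / 300 / 200.
```

Whatever rounding rule is chosen, the property worth preserving is that the payouts always sum to exactly the revenue received.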

The entire stack runs natively on 0G:

0G Storage holds training datasets and model weights, addressed by content root hash.

0G Compute TEEs perform training and inference with hardware attestation, so every result is verifiable.

0G Chain (Aristotle mainnet, chain 16661) settles ownership minting and per-inference revenue distribution.

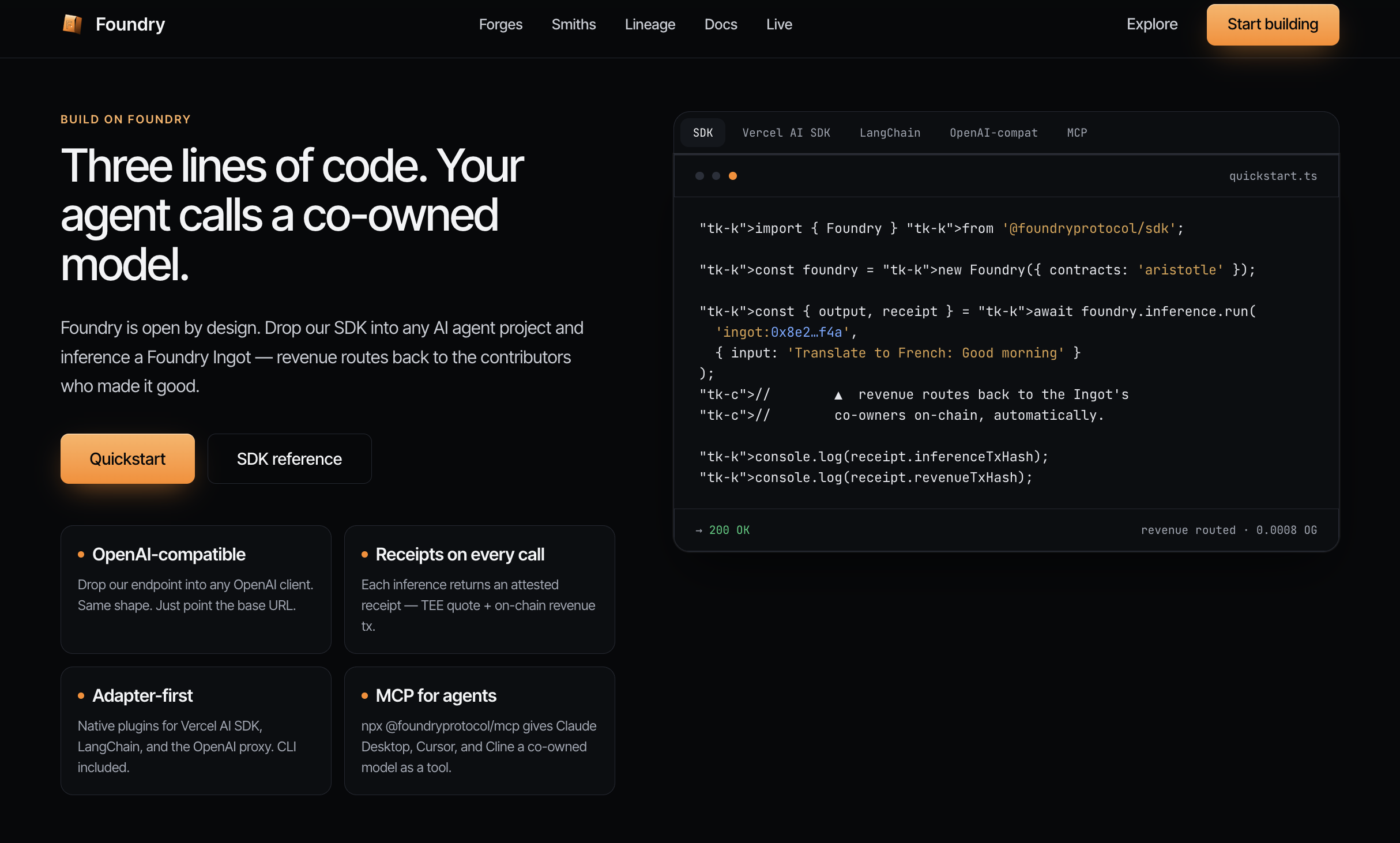

Integration takes about three lines of code through any of three paths: the @foundryprotocol/sdk TypeScript SDK, a drop-in OpenAI-compatible HTTP endpoint, or an MCP server. Existing AI apps (Vercel AI SDK, LangChain, Claude, Cursor, Cline) can switch to a co-owned model without a rewrite. Every inference returns a TEE-attested, verifiable receipt containing requestId, inferenceTxHash, revenueTxHash, and latencyMs.
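As a rough sketch of the OpenAI-compatible path, the helper below builds a chat completion request for the endpoint listed on this page. The request body follows the standard OpenAI chat format. The Bearer-token header and the model identifier `example-ingot-model` are assumptions for illustration; the actual authentication scheme and model names should be taken from the Foundry docs.

```typescript
// Sketch of a request to the OpenAI-compatible endpoint.
// Assumed for illustration: Bearer auth and the model id passed in.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

const FOUNDRY_CHAT_URL = "https://api.foundryprotocol.xyz/v1/chat/completions";

function buildChatRequest(apiKey: string, model: string, messages: ChatMessage[]) {
  return {
    url: FOUNDRY_CHAT_URL,
    init: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        Authorization: `Bearer ${apiKey}`, // assumed auth scheme
      },
      // Standard OpenAI chat-completions body: a model id plus messages.
      body: JSON.stringify({ model, messages }),
    },
  };
}

// Usage (not executed here; requires a runtime with fetch and a valid key):
//   const { url, init } = buildChatRequest(key, "example-ingot-model", [
//     { role: "user", content: "Classify this clause." },
//   ]);
//   const response = await fetch(url, init);
```

Separating request construction from the network call keeps the sketch testable offline and makes it easy to swap in any HTTP client.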

Why this matters for the 0G ecosystem:

It makes 0G the home of model ownership. To our knowledge, no other chain has a primitive where training a model mints a tradable, revenue-bearing on-chain asset. Foundry makes "own the model, not just rent the API" a 0G-native capability.

It drives real, recurring on-chain activity. Every inference settles on 0G Chain (recorded as inferenceTxHash and revenueTxHash) and runs as a 0G Compute TEE job, so usage compounds into measurable Storage, Compute, and Chain demand instead of one-off deploys.

It turns every other 0G project into a stakeholder. Agent, memory, DeFi, and infra teams already produce the data and compute Forges consume. Foundry lets them contribute what they already run and become co-owners — a built-in network effect that keeps builders inside the 0G economy.

It composes; it doesn't compete. Foundry is pure ownership + revenue infrastructure. Other 0G projects plug into it as their model backend and inherit verifiable receipts and a passive revenue rail for free.

Live site: https://foundryprotocol.xyz · API: https://api.foundryprotocol.xyz/v1/chat/completions

Foundry Protocol was built entirely from scratch during this hackathon — no prior codebase, no forked starter. We shipped the full vertical, from Solidity contracts to a live product on 0G Aristotle mainnet, through several rounds of iteration.

Iteration 1 — Core ownership primitive. Designed and wrote the contract suite from zero: ForgeFactory, ContributionRegistry, Ingot (ERC-721 model cap table), and IngotRegistry. Proved out the central idea — pooling data + compute + capital and minting proportional on-chain ownership.

Iteration 2 — Revenue and economics. Added FORGEToken and the on-chain RevenueSplitter, closing the loop so that every inference automatically distributes revenue to Ingot co-owners with no manual payout. This is what turned Foundry from "a registry" into "an economy."

Iteration 3 — 0G-native integration. Wired the full lifecycle into 0G: datasets and weights to 0G Storage (content-root addressed), training/inference into 0G Compute TEEs with attestation, settlement on 0G Chain (Aristotle, chain 16661). Every inference now returns a verifiable receipt (inferenceTxHash, revenueTxHash, attestation).
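A client consuming these receipts might begin with a structural check before looking anything up on-chain. The sketch below is one possible shape check, assuming the receipt arrives as a flat JSON object with the fields requestId, inferenceTxHash, revenueTxHash, and latencyMs, and that transaction hashes are 32-byte hex strings. The object layout is an assumption, and the check does not verify the TEE attestation or confirm that the transactions exist.

```typescript
// Sketch: structural validation of an inference receipt.
// The flat object layout is an assumption; field names come from the text.
interface InferenceReceipt {
  requestId: string;
  inferenceTxHash: string;
  revenueTxHash: string;
  latencyMs: number;
}

// EVM transaction hashes are 32 bytes: "0x" followed by 64 hex characters.
const TX_HASH_PATTERN = /^0x[0-9a-fA-F]{64}$/;

function isTxHash(value: unknown): value is string {
  return typeof value === "string" && TX_HASH_PATTERN.test(value);
}

function isInferenceReceipt(value: unknown): value is InferenceReceipt {
  if (typeof value !== "object" || value === null) {
    return false;
  }
  const r = value as Record<string, unknown>;
  const requestId = r["requestId"];
  const latencyMs = r["latencyMs"];
  return (
    typeof requestId === "string" &&
    requestId.length > 0 &&
    isTxHash(r["inferenceTxHash"]) &&
    isTxHash(r["revenueTxHash"]) &&
    typeof latencyMs === "number" &&
    Number.isFinite(latencyMs) &&
    latencyMs >= 0
  );
}
```

A receipt that passes this check is only well-formed. Confirming it would still require fetching both transactions from 0G Chain and validating the attestation.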

Iteration 4 — Developer surface. Built three integration paths so any team can adopt it in minutes: the @foundryprotocol/sdk TypeScript SDK, an OpenAI-compatible HTTP API, and an MCP server (list_ingots, run_inference, get_ingot, get_lineage, get_attestation), plus drop-in Vercel AI SDK and LangChain adapters.
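For the MCP path, tool invocations travel as JSON-RPC 2.0 messages using the protocol's `tools/call` method. The sketch below builds such a message for the tool names listed above. The argument names `ingotId` and `prompt` in the usage comment are hypothetical; the real input schema for each tool would come from the server's tool listing.

```typescript
// Sketch: building an MCP "tools/call" request for the Foundry MCP server.
// Tool names come from the text; argument names are hypothetical.
type FoundryTool =
  | "list_ingots"
  | "run_inference"
  | "get_ingot"
  | "get_lineage"
  | "get_attestation";

interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params: { name: FoundryTool; arguments: Record<string, unknown> };
}

function buildToolCall(
  id: number,
  name: FoundryTool,
  args: Record<string, unknown> = {}
): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call", // standard MCP method for invoking a tool
    params: { name, arguments: args },
  };
}

// Usage (argument names are illustrative only):
//   buildToolCall(1, "run_inference", { ingotId: "example", prompt: "Hello" });
//   buildToolCall(2, "list_ingots");
```

In practice an MCP client library handles this framing; the sketch only shows what goes over the wire.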

Iteration 5 — Product, content, and polish. Shipped the live web app — dashboard, forge ledger, Ingot detail views, lineage graph, AI forge wizard, and TEE attestation viewer — full developer docs, and a real live Ingot catalog (5 co-owned models, 4–9 contributors each: Konkani↔English, Konkani news, Tulu↔English, Clause Classifier, MSA-clause).

Final version — deployed and live on 0G Aristotle mainnet (chain 16661):

ContributionRegistry 0x05235Ba0F2a77bcaB87371E4d797D6830ddC2d86

Ingot 0x39B736f424754d05a0da186d89015b74d1DDe1d3

RevenueSplitter 0xC58E0F32BD43e43153D3CA8ee8F25C8198789289

ForgeFactory 0x636109264EBF6cFD18CC38bD43eDf9cCad7ae23D

IngotRegistry 0xF8f3fAE648A8d7ee4Df0A7b10a0F759938aab7e1
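For teams wiring these deployments into their own code, the addresses above can be captured in a small typed config. The sketch below is one way to do that, with a format check that each address is "0x" followed by 40 hex characters. The constant and function names are made up for this example, and the check covers format only; it does not validate the EIP-55 checksum or confirm that code is deployed at the address.

```typescript
// Sketch: deployment config for Foundry on 0G Aristotle mainnet.
// Constant and function names are illustrative; values come from the list above.
const ARISTOTLE_CHAIN_ID = 16661;

const FOUNDRY_CONTRACTS: Record<string, string> = {
  ContributionRegistry: "0x05235Ba0F2a77bcaB87371E4d797D6830ddC2d86",
  Ingot: "0x39B736f424754d05a0da186d89015b74d1DDe1d3",
  RevenueSplitter: "0xC58E0F32BD43e43153D3CA8ee8F25C8198789289",
  ForgeFactory: "0x636109264EBF6cFD18CC38bD43eDf9cCad7ae23D",
  IngotRegistry: "0xF8f3fAE648A8d7ee4Df0A7b10a0F759938aab7e1",
};

// Format check only: "0x" plus 40 hex characters (20 bytes).
function isAddressFormat(value: unknown): boolean {
  return typeof value === "string" && /^0x[0-9a-fA-F]{40}$/.test(value);
}

// Returns the names of any contracts whose address is malformed.
function malformedContracts(contracts: Record<string, string>): string[] {
  return Object.keys(contracts).filter((name) => !isAddressFormat(contracts[name]));
}
```

Running `malformedContracts(FOUNDRY_CONTRACTS)` at startup is a cheap guard against copy-paste truncation before any transaction is sent.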

The result is a complete, working product — not a prototype: a co-owned model can be forged, deployed, served, and paid out end-to-end on 0G today.
