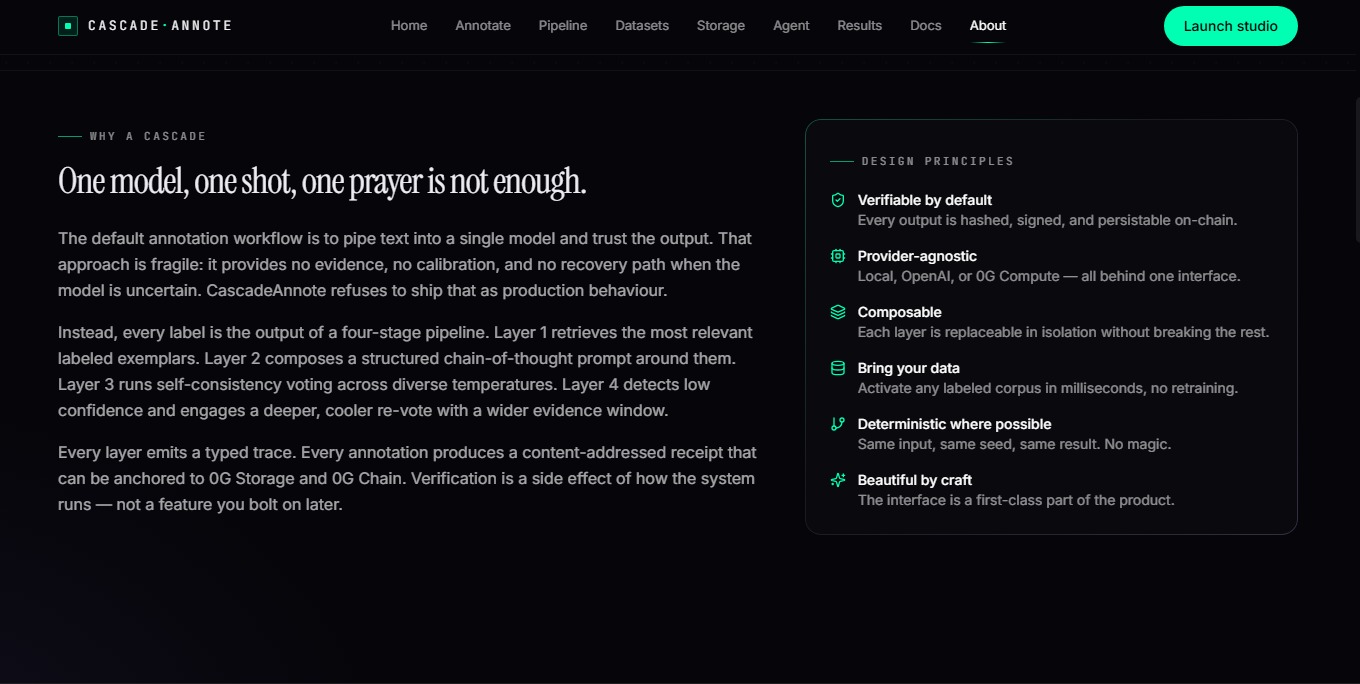

## The Problem

Standard AI annotation pipelines pipe text into a single model and trust the output blindly. This approach is fragile: it provides no evidence, no calibration, and no recovery path when the model is uncertain. Labels produced this way are unverifiable and untraceable.

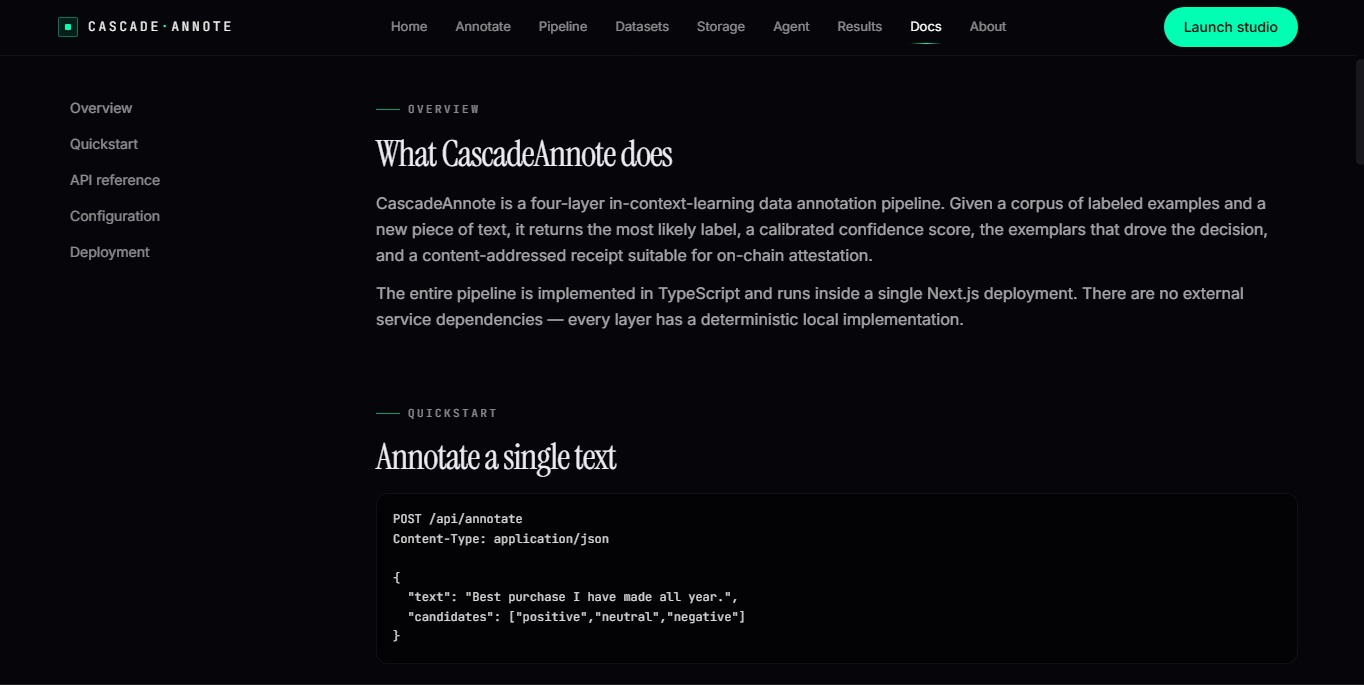

## The Solution — CascadeAnnote

CascadeAnnote treats every label as a falsifiable claim backed by evidence. It runs every annotation through a 4-layer cascade:

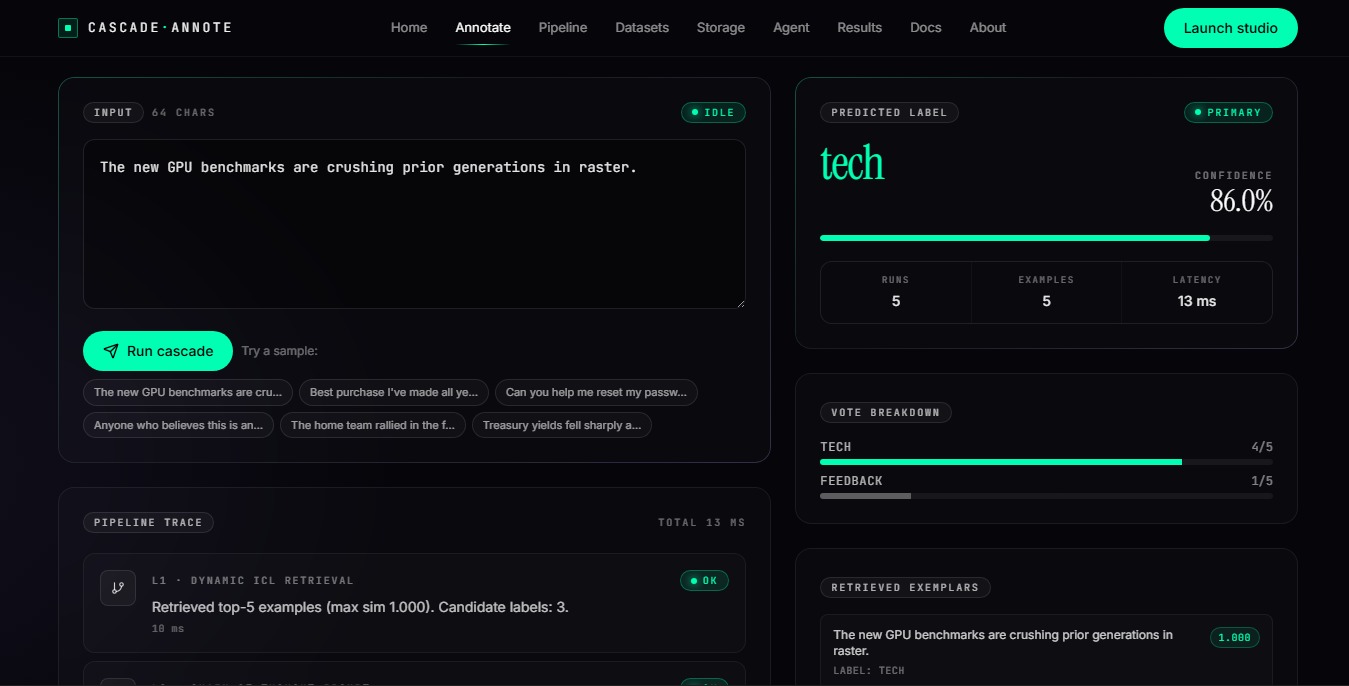

### L1: Dynamic ICL Retrieval

TF-IDF with cosine similarity retrieves the most relevant labeled exemplars from the corpus in real time.
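The retrieval step can be sketched roughly as follows. This is a minimal illustration and not the project's actual code: the `Exemplar` type, the function names, the tokenizer regex, and the smoothed IDF formula are all assumptions chosen to keep the example self-contained.

```typescript
// Hypothetical names throughout; the project's actual retrieval code may differ.
interface Exemplar {
  text: string;
  label: string;
}

type Vector = Map<string, number>;

// Lowercased word tokens plus adjacent-word bigrams.
function tokenize(text: string): string[] {
  const words: string[] = text.toLowerCase().match(/[a-z0-9']+/g) ?? [];
  const bigrams = words.slice(0, -1).map((w, i) => `${w} ${words[i + 1]}`);
  return words.concat(bigrams);
}

// Raw term counts for one document.
function termCounts(tokens: string[]): Vector {
  const counts: Vector = new Map();
  for (const t of tokens) counts.set(t, (counts.get(t) ?? 0) + 1);
  return counts;
}

// Cosine similarity between two sparse vectors; 0 when either is empty.
function cosine(a: Vector, b: Vector): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((w, term) => {
    normA += w * w;
    dot += w * (b.get(term) ?? 0);
  });
  b.forEach((w) => {
    normB += w * w;
  });
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

// Rank corpus exemplars against the query and return the top k.
function retrieve(query: string, corpus: Exemplar[], k = 3) {
  const docs = corpus.map((e) => termCounts(tokenize(e.text)));
  const docFreq: Vector = new Map();
  for (const d of docs) {
    d.forEach((_, term) => docFreq.set(term, (docFreq.get(term) ?? 0) + 1));
  }
  // Smoothed IDF (an assumed variant) so unseen query terms get a finite weight.
  const idf = (term: string) =>
    Math.log((1 + corpus.length) / (1 + (docFreq.get(term) ?? 0))) + 1;
  const weigh = (counts: Vector): Vector => {
    const v: Vector = new Map();
    counts.forEach((n, term) => v.set(term, n * idf(term)));
    return v;
  };
  const q = weigh(termCounts(tokenize(query)));
  return corpus
    .map((exemplar, i) => ({ exemplar, score: cosine(q, weigh(docs[i])) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k);
}

// Usage with a made-up three-item corpus.
const exampleCorpus: Exemplar[] = [
  { text: "I love this phone", label: "positive" },
  { text: "terrible battery life", label: "negative" },
  { text: "the weather is nice today", label: "neutral" },
];
const topMatches = retrieve("battery life is terrible", exampleCorpus, 2);
```

Because everything is plain counting and arithmetic, a sketch like this runs in a serverless function without a vector database or GPU.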

### L2: Chain-of-Thought Reasoning

A structured 5-step CoT prompt builds an evidence-backed reasoning trace before committing to a label.
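As a rough sketch of what such a prompt builder might look like: the five step texts below are purely illustrative, since the actual step wording is not given here, and `buildCotPrompt` and `extractLabel` are hypothetical names. The extractor shows only one simple strategy, reading a final `Label:` line.

```typescript
interface Exemplar {
  text: string;
  label: string;
}

// Illustrative step wording only; the real five steps are not documented here.
const STEPS = [
  "Restate what the text is about.",
  "Quote the phrases that serve as evidence.",
  "Compare the text with the labeled examples.",
  "Weigh the evidence for each candidate label.",
  "Commit to one label on a final line formatted as 'Label: <label>'.",
];

// Assemble allowed labels, retrieved exemplars, the input, and the numbered steps.
function buildCotPrompt(text: string, labels: string[], exemplars: Exemplar[]): string {
  const shots = exemplars
    .map((e, i) => `Example ${i + 1}: "${e.text}" -> ${e.label}`)
    .join("\n");
  const steps = STEPS.map((s, i) => `${i + 1}. ${s}`).join("\n");
  return [
    `Allowed labels: ${labels.join(", ")}`,
    shots,
    `Text to annotate: "${text}"`,
    "Reason through these steps in order:",
    steps,
  ].join("\n\n");
}

// One simple extraction strategy: take the last "Label: x" line
// and accept it only if x is one of the allowed labels.
function extractLabel(completion: string, labels: string[]): string | null {
  const lines = completion.split("\n").reverse();
  for (const line of lines) {
    const m = /^\s*label:\s*(.+?)\s*$/i.exec(line);
    if (m) {
      const candidate = m[1].toLowerCase();
      return labels.find((l) => l.toLowerCase() === candidate) ?? null;
    }
  }
  return null;
}
```

Returning `null` instead of guessing lets a later stage treat an unparseable completion as a missing vote.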

### L3: Self-Consistency Vote

Five independent inference runs at varied temperatures produce a majority-voted label with a calibrated confidence score.
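A minimal sketch of the voting step, under stated assumptions: the `Infer` callback stands in for whichever provider is configured, temperatures are spread evenly over an assumed 0.3 to 0.7 range, and confidence is taken as the plain vote share. The real system may calibrate its confidence differently.

```typescript
// The provider call is injected, so any backend could sit behind it (hypothetical signature).
type Infer = (prompt: string, temperature: number) => Promise<string>;

interface VoteResult {
  label: string;
  confidence: number; // share of runs that agreed with the winning label
  votes: Map<string, number>;
}

// Evenly spaced temperatures from min to max inclusive (assumed schedule).
function temperatureSchedule(runs: number, min = 0.3, max = 0.7): number[] {
  if (runs <= 1) return [min];
  const step = (max - min) / (runs - 1);
  return Array.from({ length: runs }, (_, i) => min + step * i);
}

// Majority vote; ties go to the label that appeared first.
function majorityVote(labels: string[]): VoteResult {
  if (labels.length === 0) throw new Error("majorityVote needs at least one label");
  const votes = new Map<string, number>();
  for (const l of labels) votes.set(l, (votes.get(l) ?? 0) + 1);
  let label = labels[0];
  let best = 0;
  votes.forEach((count, candidate) => {
    if (count > best) {
      best = count;
      label = candidate;
    }
  });
  return { label, confidence: best / labels.length, votes };
}

// Run the prompt once per temperature and vote over the returned labels.
async function selfConsistencyVote(
  prompt: string,
  infer: Infer,
  runs = 5,
): Promise<VoteResult> {
  const temps = temperatureSchedule(runs);
  const labels = await Promise.all(temps.map((t) => infer(prompt, t)));
  return majorityVote(labels);
}
```

With five runs, a 3 to 2 split yields a confidence of 0.6, which gives a later threshold check a concrete number to compare against.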

### L4: Adaptive Fallback

If confidence falls below the threshold, the system automatically widens the evidence window and re-votes at cooler temperatures.
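The fallback loop might look something like the sketch below. Every number in it is an assumption made for illustration: the 0.6 threshold, doubling the exemplar count `k`, cooling by 0.2 per retry, and the retry cap. The `VoteFn` callback is a hypothetical stand-in for the retrieval, prompting, and voting layers combined.

```typescript
interface Vote {
  label: string;
  confidence: number;
}

interface Settings {
  k: number; // number of exemplars retrieved (the evidence window)
  maxTemperature: number; // upper bound of the sampling temperatures
}

// Hypothetical callback that runs retrieval, prompting, and voting with the given settings.
type VoteFn = (settings: Settings) => Promise<Vote>;

// Widen the evidence window and cool the sampling range.
// Doubling k and the 0.2 step are illustrative choices.
function escalate(s: Settings): Settings {
  return { k: s.k * 2, maxTemperature: Math.max(0.1, s.maxTemperature - 0.2) };
}

async function annotateWithFallback(
  vote: VoteFn,
  initial: Settings = { k: 3, maxTemperature: 0.7 },
  threshold = 0.6,
  maxRetries = 2,
): Promise<Vote & { attempts: number }> {
  let settings = initial;
  let best = await vote(settings);
  let attempts = 1;
  while (best.confidence < threshold && attempts <= maxRetries) {
    settings = escalate(settings);
    const retry = await vote(settings);
    attempts += 1;
    // Keep whichever attempt was most confident.
    if (retry.confidence > best.confidence) best = retry;
  }
  return { ...best, attempts };
}
```

Capping retries keeps the worst-case cost bounded, and returning `attempts` leaves a trace of how hard the system had to work for a label.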

## 0G Infrastructure Integration

CascadeAnnote is natively built on 0G's modular stack:

- 0G Storage: every annotation is SHA-256 hashed and uploaded to the 0G indexer, producing a verifiable rootHash + txHash receipt

- 0G Compute: Layer 3 inference can be routed through 0G Compute for fully on-network verifiable inference

- 0G Chain: a stable did:0g agent identity is derived per deployment; receipts are anchored under this DID
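The hashing half of the storage step can be sketched with Node's built-in `crypto` module. The `Annotation` shape and the sorted-key serialization are assumptions made for illustration, and the upload to 0G Storage is deliberately left out because the client calls are not described here.

```typescript
import { createHash } from "node:crypto";

// Hypothetical annotation shape; the real record likely carries more fields.
interface Annotation {
  text: string;
  label: string;
  confidence: number;
}

// Serialize with sorted keys so the same annotation always yields the same bytes.
// This handles flat objects only, which is enough for the shape above.
function canonicalize(a: Annotation): string {
  return JSON.stringify(a, Object.keys(a).sort());
}

// Hex-encoded SHA-256 digest of the canonical form.
function hashAnnotation(a: Annotation): string {
  return createHash("sha256").update(canonicalize(a), "utf8").digest("hex");
}

// Recompute the digest and compare it with the one recorded in a receipt.
function verifyAnnotation(a: Annotation, expectedHash: string): boolean {
  return hashAnnotation(a) === expectedHash;
}

const sample: Annotation = { text: "great phone", label: "positive", confidence: 0.8 };
const sampleHash = hashAnnotation(sample);
```

Anyone holding the annotation and its recorded hash can rerun `verifyAnnotation` locally, and changing a single field produces a different digest.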

## Tech Stack

- Next.js 15 + TypeScript + Tailwind CSS

- Pure TypeScript engine — no GPU, no heavy ML dependencies

- Provider-agnostic: Local / OpenAI / 0G Compute

- Single Vercel deployment — fully serverless

## Links

- Live Demo: https://cascade-annote.vercel.app

## What We Built During the Hackathon

### Week 1: Architecture & Core Engine

Designed the 4-layer cascade pipeline architecture. Implemented the L1 retrieval engine using TF-IDF with unigram and bigram indexing and cosine similarity scoring. Built the seed corpus with 60 labeled examples across 4 label families (sentiment, topic, intent, toxicity).

### Week 2: Inference & Voting

Built the chain-of-thought prompt builder (L2) with a 7-strategy label extractor. Implemented the self-consistency voter (L3) with 5 inference runs at temperatures [0.3, 0.7]. Integrated a local ICL classifier, OpenAI, and 0G Compute as provider-agnostic backends.

### Week 3: 0G Integration & Verifiability

Integrated the 0G Storage adapter: every annotation is SHA-256 hashed and uploaded with a verifiable rootHash + txHash receipt. Implemented the 0G Chain agent identity (did:0g DID) and 0G Compute inference routing. Built the adaptive fallback layer (L4) with confidence thresholding.

### Week 4: Frontend & Deployment

Built 9 frontend pages, including the annotate studio, pipeline explorer, dataset uploader, storage receipt explorer, agent identity, and results dashboard. Deployed as a single Next.js 15 serverless app on Vercel.

## Current Status

✅ Fully deployed and live at https://cascade-annote.vercel.app

✅ All 4 pipeline layers operational

✅ 0G Storage receipts working

✅ Batch annotation API (up to 50 texts per call)

✅ CSV corpus upload and activation

No funds raised yet. CascadeAnnote is currently bootstrapped and self-funded as an independent open-source project. We are open to grants, ecosystem funding, and strategic partnerships, particularly within the 0G ecosystem, to accelerate development of the active learning loop, multi-agent voting, and sealed inference features on the roadmap.