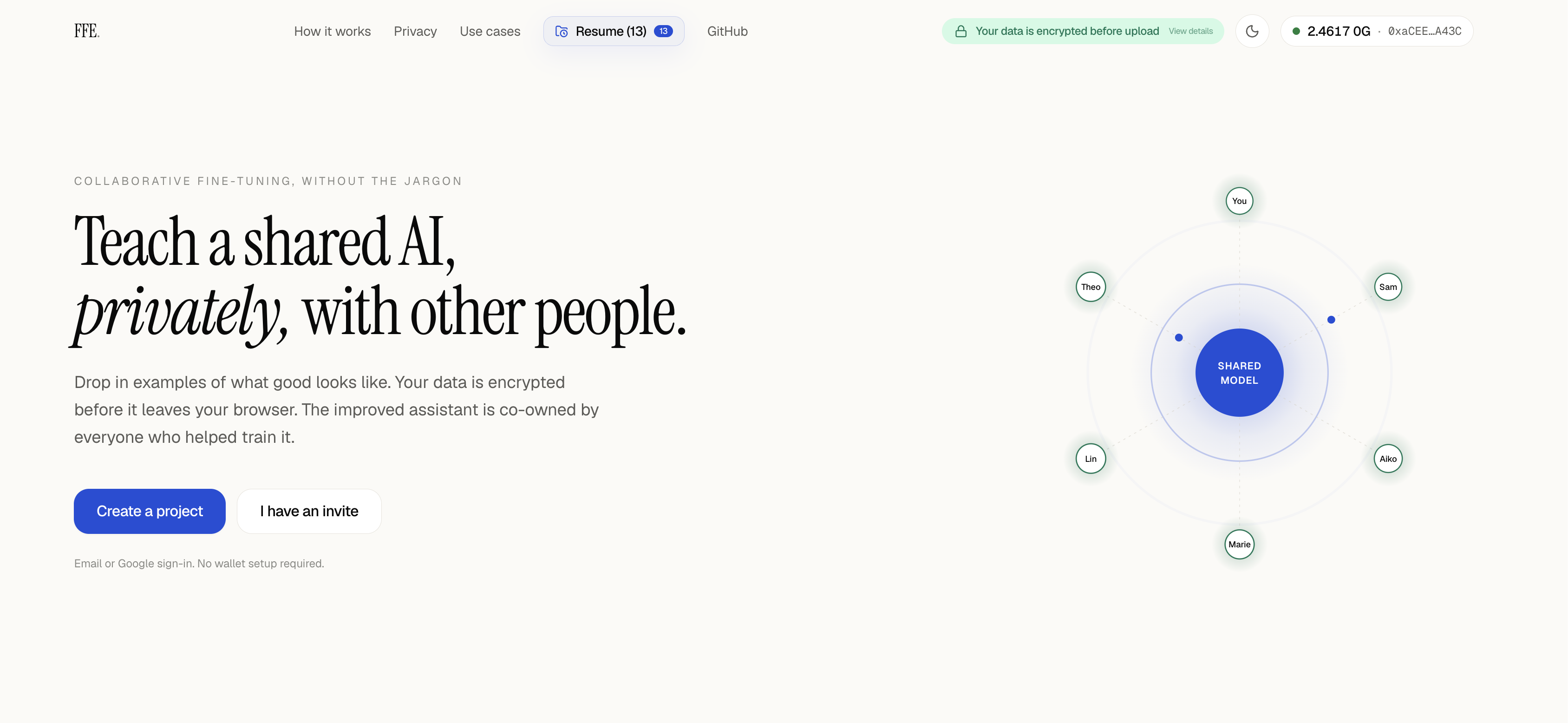

Secure Collaborative Model Fine-tuning Without Trust Assumptions

FFE (Federated Fine-tuning Extension) extends 0G's fine-tuning from a single-user flow into a multi-contributor workflow: several parties can jointly fine-tune one shared LoRA adapter on their combined private datasets without revealing their raw data to each other or to the aggregator operator.

How it works

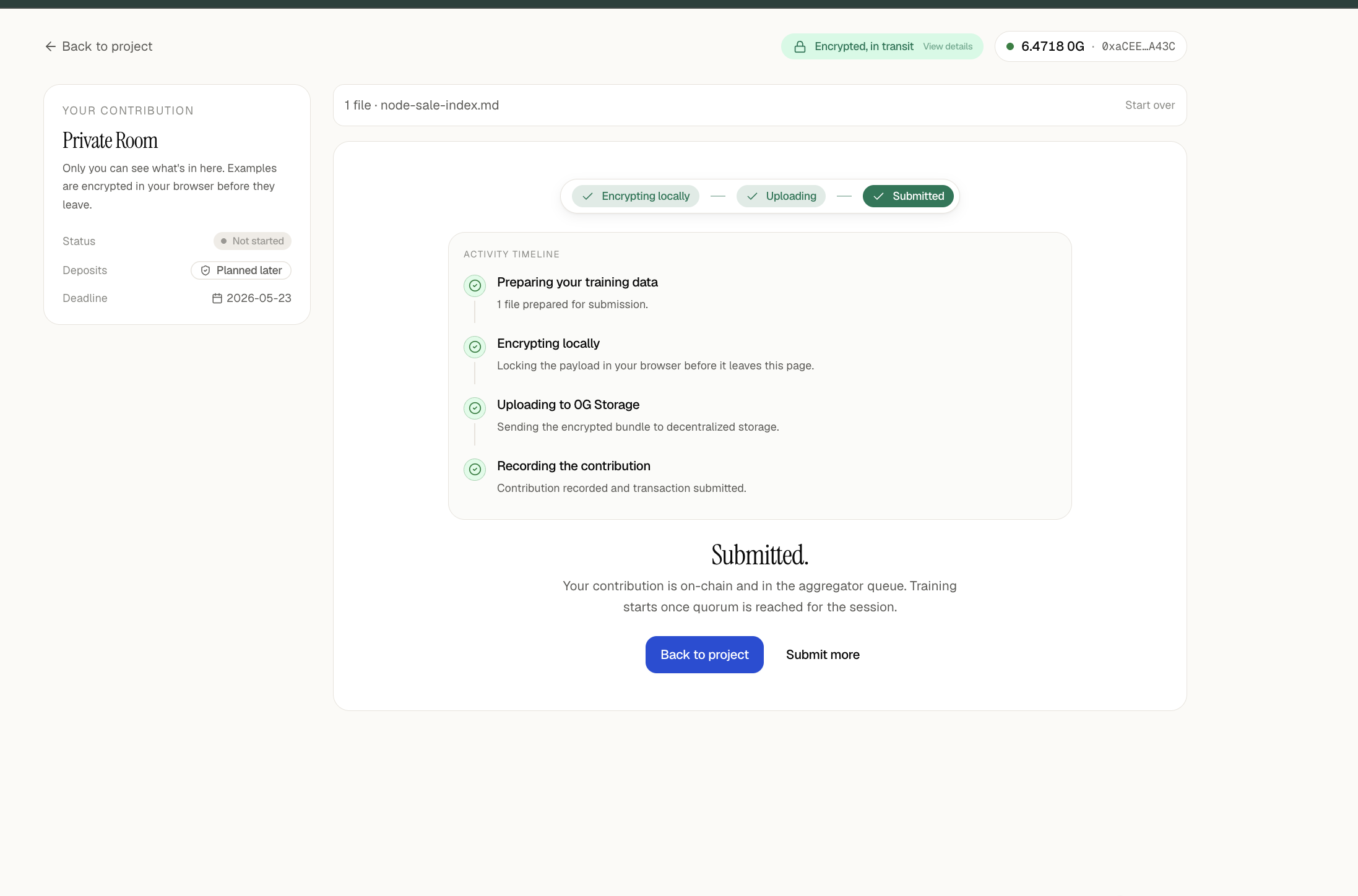

1. Contributors encrypt their JSONL datasets to the aggregator's X25519 public key and upload the ciphertext to 0G Storage. The on-chain Coordinator contract only sees a hash commitment.

2. The Coordinator tracks the session, participants, submissions, and quorum. When quorum is reached, it emits an event.

3. The aggregator fetches the encrypted blobs, decrypts them inside its process, concatenates the JSONL, and runs real 0G Compute fine-tuning to produce one shared LoRA adapter.

4. The LoRA is encrypted once with AES-256-GCM. The symmetric key is then sealed separately to each contributor's X25519 public key, and the sealed copies are stored in an ERC-7857-style INFT alongside the encrypted model blob hash.

5. Each contributor independently unseals their copy of the key and downloads the same shared model. No contributor sees anyone else's raw data; everyone gets the same trained artifact.
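Steps 1 and 2 can be illustrated with a small in-memory model of the Coordinator's bookkeeping. This is only a sketch under stated assumptions: the real contract is written in Solidity, and the class name `CoordinatorSketch`, its method names, the use of SHA-256 for the commitment, and the choice to stop accepting submissions once quorum is reached are all hypothetical, not taken from the deployed contract.

```typescript
import { createHash } from "node:crypto";

type SessionStatus = "open" | "quorumReached" | "cancelled";

// Assumed stand-in commitment: SHA-256 over the ciphertext, hex-encoded.
function commit(ciphertext: Uint8Array): string {
  return createHash("sha256").update(ciphertext).digest("hex");
}

// Hypothetical in-memory model of a session; not the on-chain interface.
class CoordinatorSketch {
  private status: SessionStatus = "open";
  private readonly owner: string;
  private readonly participants: Set<string>;
  private readonly quorum: number;
  private readonly commitments = new Map<string, string>();

  constructor(owner: string, participants: string[], quorum: number) {
    this.owner = owner;
    this.participants = new Set(participants);
    if (quorum < 1 || quorum > this.participants.size) {
      throw new Error("invalid quorum");
    }
    this.quorum = quorum;
  }

  // Records only the hash commitment, never the data.
  // Returns true when this submission reaches quorum (where an event would be emitted).
  submit(sender: string, blobHash: string): boolean {
    if (this.status !== "open") throw new Error("session is not open");
    if (!this.participants.has(sender)) throw new Error("not a participant");
    if (this.commitments.has(sender)) throw new Error("already submitted");
    this.commitments.set(sender, blobHash);
    if (this.commitments.size < this.quorum) return false;
    this.status = "quorumReached";
    return true;
  }

  // Owner-only cancellation while the session is still open.
  cancel(sender: string): void {
    if (sender !== this.owner) throw new Error("only the owner can cancel");
    if (this.status !== "open") throw new Error("session is not open");
    this.status = "cancelled";
  }

  getStatus(): SessionStatus {
    return this.status;
  }

  getCommitment(participant: string): string | undefined {
    return this.commitments.get(participant);
  }
}

// Usage: three registered contributors, quorum of two.
const session = new CoordinatorSketch("owner", ["alice", "bob", "carol"], 2);
const first = session.submit("alice", commit(Buffer.from("alice ciphertext")));
const second = session.submit("bob", commit(Buffer.from("bob ciphertext")));
console.log(first, second, session.getStatus());
```

In this model the second submission is the one that reaches quorum, which is the point at which the aggregator would pick up the session.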

What's in the box

- sdk/: TypeScript SDK (@notmartin/ffe) with openSession, submit, download, plus crypto, storage, coordinator, and INFT modules.

- contracts/: Solidity Coordinator and INFTMinter, deployed on 0G Mainnet.

- aggregator/: Node service that listens for quorum, decrypts blobs, drives 0G fine-tuning, encrypts and seals the result, and mints the INFT. Dockerized for Railway.

- frontend/: Next.js + Privy app for project owners and contributors, covering solo and multi-contributor flows, invite emails, live training logs, animated training stages, and resume-session support, with Supabase-backed project persistence.

Why it matters

Today, fine-tuning a custom model on private data is a solo activity: your dataset, your model, your silo. Real-world value often lives in combined datasets (hospitals, research groups, indie devs, DAOs) that no single party can or will share in the clear. FFE makes that combination possible on 0G: contributors keep their data encrypted, the aggregator never persists plaintext, and the trained model is owned jointly through an INFT with per-contributor sealed keys.

Tech stack: 0G Chain, 0G Storage, 0G Compute (fine-tuning), Solidity, TypeScript, Node, Next.js, Privy, Supabase, X25519 + AES-256-GCM, LoRA on Qwen2.5-0.5B-Instruct.

We built FFE (Federated Fine-tuning Extension) end-to-end during the hackathon, going from a 0G fine-tuning flow that only supported a single user training on one private dataset to a multi-contributor workflow where several parties jointly fine-tune one shared LoRA without ever revealing their raw data to each other.

Concretely, what we shipped:

- Smart contracts (Solidity, deployed on 0G Mainnet)

  - Coordinator (0x840C3E83A5f3430079Aff7247CD957c994076015): manages session lifecycle, participants, submissions, quorum, and owner cancellation.

  - INFTMinter (0x04D804912881B692b585604fb0dA1CE0D403487E): ERC-7857-style INFT storing the encrypted LoRA blob hash and a sealed AES key per contributor.

- TypeScript SDK (@notmartin/ffe): openSession, submit, download, plus crypto, storage, coordinator, and INFT modules. Includes a LoRA comparison tool to inspect training metadata and weight diffs between single-user and federated runs.

- Aggregator service (Node/TS, Dockerized for Railway deploy)

  - Event listener polling Coordinator for quorum.

  - Blob processor that fetches encrypted JSONL from 0G Storage and decrypts with the aggregator's X25519 key.

  - Training bridge that drives real 0G Compute fine-tuning (Qwen2.5-0.5B-Instruct via provider 0x940b4a101CaBa9be04b16A7363cafa29C1660B0d).

  - Minter that encrypts the trained LoRA with AES-256-GCM, seals the key per contributor, uploads to 0G Storage, and mints the INFT.

  - Wallet diagnostic + stuck-task recovery scripts and live training-log streaming.

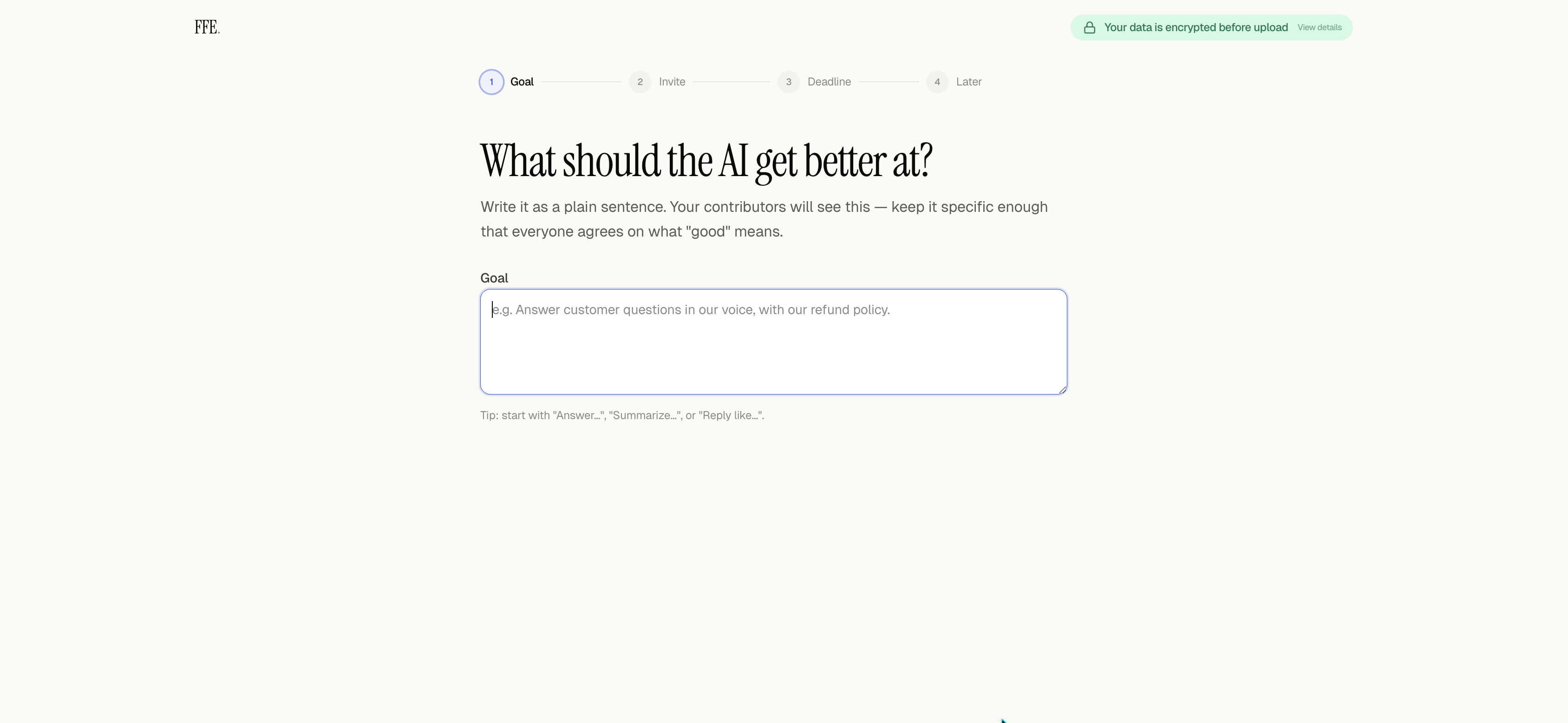

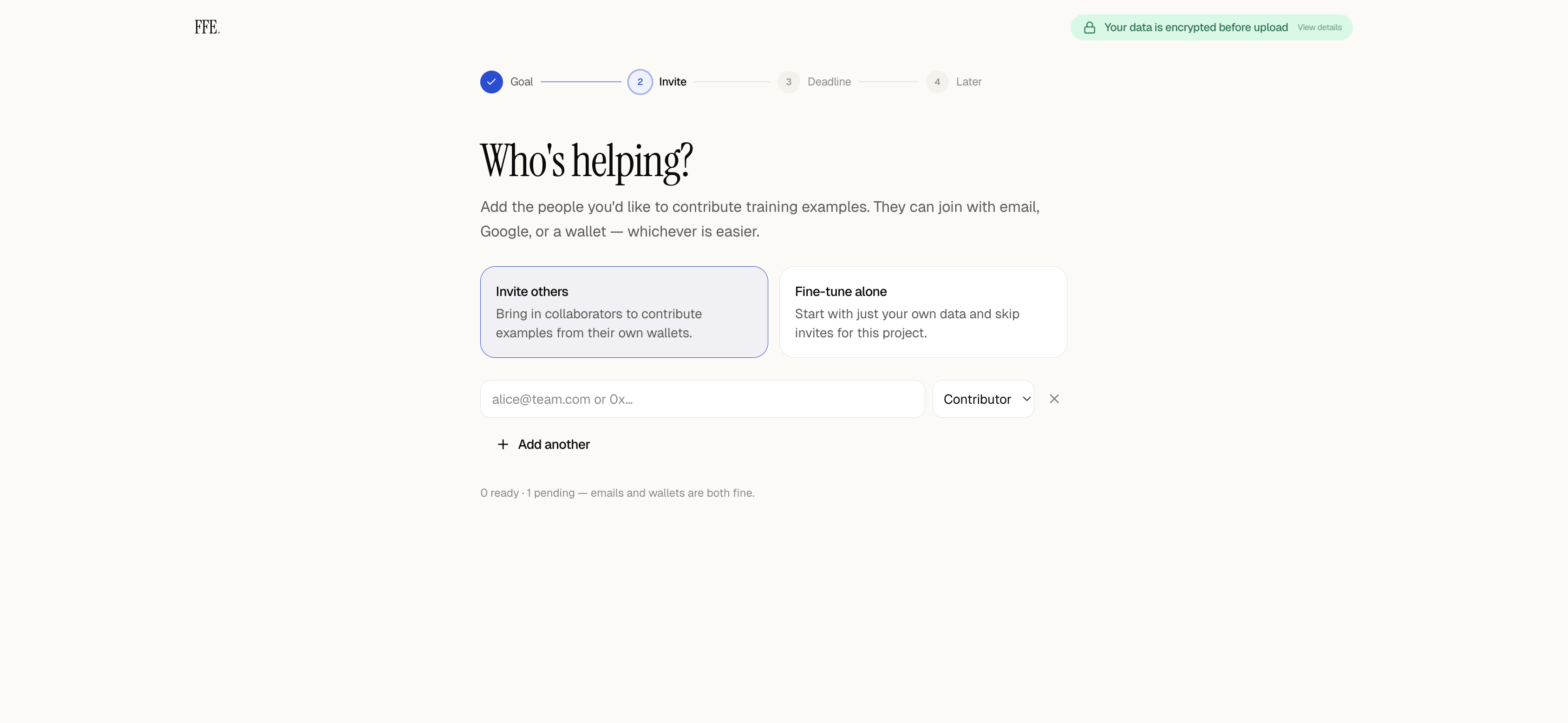

- Frontend (Next.js + Privy)

  - Solo and multi-contributor project setup flows.

  - Contributor pre-registration, invite emails, Supabase-backed project persistence, and resume-session drawer.

  - Animated training stage UI with live aggregator logs, upload timeline, and owner-side cancellation.

  - Deferred on-chain session creation until submit, to avoid wasted gas on abandoned drafts.

  - Remotion-based intro composition.

- Cryptographic guarantees: contributors encrypt to the aggregator's X25519 pubkey; the Coordinator only stores blob-hash commitments; the trained LoRA is encrypted once and the AES key is sealed independently for each contributor, so each party can decrypt the same shared model without trusting the others.

- End-to-end live run: a real federated session on 0G Mainnet that combined multiple contributor datasets, trained a shared LoRA via 0G Compute, minted the INFT, and let each contributor download and decrypt the same model artifact.
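The "encrypt once, seal the key per contributor" pattern from the cryptographic guarantees can be sketched with Node's built-in `crypto` module. This is a minimal illustration, not the SDK's actual crypto module: the sealing construction (ephemeral X25519 key agreement, HKDF-SHA256, then AES-256-GCM key wrap), the HKDF info string, and all function and type names below are assumptions made for the example.

```typescript
import {
  createCipheriv,
  createDecipheriv,
  createPublicKey,
  diffieHellman,
  generateKeyPairSync,
  hkdfSync,
  randomBytes,
  KeyObject,
} from "node:crypto";

interface GcmBox { iv: Buffer; ciphertext: Buffer; tag: Buffer }
interface SealedKey { ephemeralPublicKey: Buffer; box: GcmBox }

// AES-256-GCM with a fresh random 96-bit nonce per encryption.
function gcmEncrypt(key: Buffer, plaintext: Buffer): GcmBox {
  const iv = randomBytes(12);
  const cipher = createCipheriv("aes-256-gcm", key, iv);
  const ciphertext = Buffer.concat([cipher.update(plaintext), cipher.final()]);
  return { iv, ciphertext, tag: cipher.getAuthTag() };
}

function gcmDecrypt(key: Buffer, box: GcmBox): Buffer {
  const decipher = createDecipheriv("aes-256-gcm", key, box.iv);
  decipher.setAuthTag(box.tag);
  // final() throws if the authentication tag does not verify.
  return Buffer.concat([decipher.update(box.ciphertext), decipher.final()]);
}

// X25519 shared secret stretched into a 32-byte wrapping key (assumed info label).
function deriveWrapKey(privateKey: KeyObject, publicKey: KeyObject): Buffer {
  const shared = diffieHellman({ privateKey, publicKey });
  return Buffer.from(hkdfSync("sha256", shared, Buffer.alloc(0), "ffe-sketch-key-wrap", 32));
}

// Seal the model key to one recipient using a throwaway ephemeral key pair.
function sealKey(modelKey: Buffer, recipientPublicKey: KeyObject): SealedKey {
  const ephemeral = generateKeyPairSync("x25519");
  const wrapKey = deriveWrapKey(ephemeral.privateKey, recipientPublicKey);
  return {
    ephemeralPublicKey: ephemeral.publicKey.export({ type: "spki", format: "der" }),
    box: gcmEncrypt(wrapKey, modelKey),
  };
}

function unsealKey(sealed: SealedKey, recipientPrivateKey: KeyObject): Buffer {
  const ephemeralPublicKey = createPublicKey({
    key: sealed.ephemeralPublicKey,
    format: "der",
    type: "spki",
  });
  return gcmDecrypt(deriveWrapKey(recipientPrivateKey, ephemeralPublicKey), sealed.box);
}

// Usage: one encrypted model blob, one sealed key per contributor.
const alice = generateKeyPairSync("x25519");
const bob = generateKeyPairSync("x25519");
const lora = Buffer.from("stand-in bytes for the trained LoRA adapter");

const modelKey = randomBytes(32);
const encryptedModel = gcmEncrypt(modelKey, lora); // encrypted exactly once
const sealedForAlice = sealKey(modelKey, alice.publicKey);
const sealedForBob = sealKey(modelKey, bob.publicKey);

const aliceModel = gcmDecrypt(unsealKey(sealedForAlice, alice.privateKey), encryptedModel);
const bobModel = gcmDecrypt(unsealKey(sealedForBob, bob.privateKey), encryptedModel);
console.log(aliceModel.equals(lora) && bobModel.equals(lora));
```

Because only the 32-byte key is sealed per recipient, adding a contributor costs one small sealed record rather than another copy of the encrypted model.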

TL;DR: we took 0G fine-tuning from N=1 → N>1, privately, on mainnet, with contracts + SDK + aggregator + frontend all working together.
